2012 : Understanding the Tipsters, Margin Predictors and Fund Algorithms

Saturday, March 24, 2012 at 3:06PM |


This week brought an e-mail, nestled amongst the spam-fest that is my inbox of recent times, from a visitor to the MAFL site asking about the details of the algorithms used in MAFL. In preparing what I thought would be a brief reply I realised just how scattered are the posts that you'd need to have read and retained to understand all of what goes into the weekly wagers and predictions that appear in this blog. With the increasing volume of new visitors to MAFL I thought it was time to provide all that information in a single blog.

MARS Ratings

One of the key inputs for many of the statistical models used in MAFL is team MARS Ratings. The seminal piece on these Ratings appears in the R2 2008 Newsletter, which you can download from this page. Since then a number of blogs have been written about the features and performance of these Ratings in subsequent years, which you can find by performing a site search using the term "MARS".

MARS Ratings are my attempt to measure team strength, and they seem to do this reasonably well, at least for the purposes of MAFL. These Ratings are based on the ELO system created for rating chess players, which provides a range of tuning parameters through which ratings can be made more or less sensitive to the most recent result and can offer more or less reward for a victory over an opponent of a given rating relative to the victor.
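For readers unfamiliar with ELO-style ratings, here is a minimal sketch of the textbook chess update, not the MARS formula itself. The parameter names k and scale, and their default values, are assumptions chosen purely for illustration; the actual MARS tuning parameters are described in the posts referred to above.

```python
def expected_score(rating, opp_rating, scale=400.0):
    """Textbook ELO expected score (between 0 and 1) against an opponent.

    scale is a hypothetical tuning parameter: it controls how much reward
    is on offer for beating an opponent of a given rating.
    """
    return 1.0 / (1.0 + 10 ** ((opp_rating - rating) / scale))


def elo_update(rating, opp_rating, actual, k=20.0, scale=400.0):
    """Return the new rating after one game.

    actual is 1 for a win, 0.5 for a draw and 0 for a loss.
    k is a hypothetical tuning parameter: larger values make ratings
    more sensitive to the most recent result.
    """
    return rating + k * (actual - expected_score(rating, opp_rating, scale))


# Two equally rated teams: the winner gains exactly what the loser gives up.
winner = elo_update(1000.0, 1000.0, 1.0)
loser = elo_update(1000.0, 1000.0, 0.0)
print(winner, loser)
```

The two knobs in this sketch correspond loosely to the two kinds of tuning mentioned above: k governs sensitivity to the latest result, and scale governs the reward for beating a stronger or weaker opponent.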

Probability Predictors

Two of the Probability Predictors, WinPred and ProPred, use MARS Ratings as data inputs. They each have their own version of MARS Ratings, which differ in terms of the tuning parameters they use. More details about them appeared in a blog entitled Meet the MARS Family.

To come up with their probability predictions, WinPred and ProPred both take their respective historical MARS Ratings for all games in the previous 12 months and combine them with information about the bookmaker prices in those games, whether or not the game was an interstate clash, and a few other pieces of information about the participating teams' recent form. All of this information is then modelled via a Conditional Inference Tree forest and the resulting model used to estimate probabilities for future games for which the input values are known.

Another of the Probability Predictors is the Bookie Predictor, for which the Home team's probability is calculated by dividing TAB Sportsbet's head-to-head price for the Away team by the sum of its head-to-head prices for the Home and Away teams.
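The Bookie Predictor calculation can be sketched in a few lines. The function name and the example prices below are made up for illustration.

```python
def bookie_home_probability(home_price, away_price):
    """Home team probability implied by the two head-to-head prices.

    Computed as the Away price divided by the sum of both prices.
    """
    if home_price <= 0 or away_price <= 0:
        raise ValueError("prices must be positive")
    return away_price / (home_price + away_price)


# Hypothetical prices: Home at $1.50, Away at $2.50.
print(bookie_home_probability(1.50, 2.50))  # 0.625
```

Algebraically this is the same as taking the inverse of each price and rescaling the two so that they sum to 1, which removes the bookmaker's overround in equal proportion from both teams.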

The last of the Probability Predictors is Head-to-Head Unadjusted. It's based on yet another Conditional Inference Tree forest and uses the same inputs as ProPred. The outputs of this modelling algorithm are used, unadjusted, for the probability predictions, while an adjusted version is also used for margin prediction purposes, as discussed below. Adjustment is only required when the probability prediction for the Home team output by the model is more than 25 percentage points greater than that of the Bookie Predictor. In this case the adjusted version of Head-to-Head sets its probability prediction equal to the Bookie Predictor's plus 25 percentage points.
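The adjustment rule amounts to a cap on how far the model may sit above the bookmaker. A minimal sketch, with a hypothetical function name and illustrative probabilities:

```python
def adjusted_head_to_head(model_prob, bookie_prob, cap=0.25):
    """Cap the Home team probability at the Bookie Predictor's plus cap.

    If the model is no more than cap above the Bookie Predictor, the
    model's probability is returned unchanged.
    """
    return min(model_prob, bookie_prob + cap)


# The model says 90% and the Bookie Predictor says 50%, so the cap applies.
print(adjusted_head_to_head(0.90, 0.50))  # 0.75
# The model says 60% and the Bookie Predictor says 50%, so nothing changes.
print(adjusted_head_to_head(0.60, 0.50))  # 0.6
```

Note that the rule as described is one-sided: nothing is adjusted when the model's probability is below the Bookie Predictor's, however large the gap.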

(Note that there's a subtle, technical difference between the Head-to-Head Unadjusted Probability Predictor and ProPred in that for the former the target variable is a 0/1 variable based on whether or not a wager on the Home team was profitable, and for the latter the target is a variable that takes on the value 1 if the Home team wins, 0.5 if it draws, and 0 if it loses. Practically, this only makes a difference in drawn games where the Home team was the favourite, in which case a wager on them would have been unprofitable.)

Margin Predictors

A couple of years ago, a fantastic data exploration tool called Eureqa was launched, which uses genetic algorithms to discover models that explain numeric data (site search on "Eureqa", "Nutonian" or "Formulize" to find posts on MAFL about it). Eureqa - or Formulize as it now is - was used extensively in creating MAFL's Margin Predictors.

Aside from CN1 and CN2, each Margin Predictor was created using Eureqa by feeding it historical data about the probability predictions from a single Probability Predictor. B3 and B7 were built using the Bookie Probability Predictor; P3 and P7 used ProPred; W3 and W7 used WinPred; HU3, HU10, HA3 and HA7 used the Head-to-Head Probability Predictor in its unadjusted and adjusted forms; and C7 used the probability predictions of all the Predictors just discussed. The number in each Predictor's name refers to its Complexity as measured by Eureqa. The blog entitled Margin Prediction for 2011 has all the details.

CN1 is one of two neural networks used in MAFL and was built using the equally terrific Tiberius package. This algorithm takes as inputs the probability predictions of Bookie, ProPred, WinPred, and the adjusted and unadjusted forms of Head-to-Head. Its creation is discussed in a blog entitled Introducing MAFL's First Neural Network.

The other neural network is CN2 and it takes as its inputs the MARS Ratings of the participating teams, the TAB Sportsbet prices, and whether or not the game is an interstate clash. I've not much commented on the creation of this Margin Predictor other than in passing in the entry for Round 1 of 2011. The "C" in CN2 is meant to stand for "Combo", and it's only today as I type this that I realise CN2 doesn't really combine anything - at least not in the same sense as C7 or CN1.

Head-to-Head Tipsters

Across the seasons, MAFL has had a huge number of tipsters attempting to do no more than pick the winner. In most years the season-to-season turnover of Head-to-Head Tipsters has been substantial, but 2012 sees the return of the same 13 Tipsters as we saw last season.

BKB is based on the TAB Sportsbet prices, and tips the favourite. Where there's equal-favouritism, BKB tips the team that's higher on the ladder. Pro and Win are based on ProPred and WinPred, and pick the team to which these Predictors assign the higher probability.
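BKB's rule is simple enough to write down directly. In this sketch the function name and arguments are hypothetical, the favourite is taken to be the team with the lower price, and ladder position 1 is assumed to mean top of the ladder.

```python
def bkb_tip(home, away, home_price, away_price, home_ladder_pos, away_ladder_pos):
    """Tip the favourite; under equal-favouritism tip the higher-placed team.

    The favourite is the team with the lower head-to-head price.
    Ladder positions are assumed to be distinct, with 1 meaning first.
    """
    if home_price < away_price:
        return home
    if away_price < home_price:
        return away
    # Equal-favouritism: fall back to ladder position.
    return home if home_ladder_pos < away_ladder_pos else away


# Hypothetical game: equal prices, Away team sits higher on the ladder.
print(bkb_tip("Home", "Away", 1.90, 1.90, 7, 3))  # Away
```

Pro and Win follow the same shape, except that they compare the probabilities from ProPred and WinPred rather than the prices.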

The remaining Head-to-Head Tipsters are all heuristic tipsters and were introduced in 2009 (you can download the PDF describing them from this page - select the 2009 - MAFL Tipsters.pdf file) and then updated and slightly tweaked in 2011. The historical performance of the tweaked versions was presented in a blog entitled Heuristics v2.0 - Still Worth Following?. I still find it incredible that Tipsters based on such simple rules can perform so admirably.

Fund Algorithms

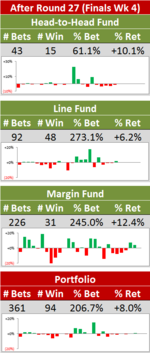

This year we've three Funds operating:

- a Line Fund, which uses its own predictive model, yet another Conditional Inference Tree forest, this one taking as inputs its own MARS Ratings based on its own tuning parameters, the TAB Sportsbet start being offered to the Home team, the participating teams' Venue Experience, the Interstate status of the contest, and information about the recent form of the participating teams

- a Head-to-Head Fund based on the Head-to-Head Probability Predictor described earlier

- a Margin Fund based on the margin predictions of CN2

More details about each of these Funds can be found in the blogs entitled MAFL Funds for 2012 : A Summary and in 2012 : Breaking With Tradition (Just A Little) - Part I and Part II.

Conclusion

I think that's all you need to know, minimally, to completely understand how the information provided in each week's blog post on Wagers & Tips has been derived. For all the nuances, though, and to understand the profit and pain of wagering on AFL, I'm afraid you'll need to wade through all the other posts in this journal.

TonyC |


Reader Comments (2)

Great site - only just discovered it and am enjoying reading through the posts and methodology. Given that you use ELO as part of one of your models - have you seen the models built for the previous Kaggle competition to create chess rating models? I had not joined Kaggle when the competition was on so am not too familiar with the models that did well - but it would be interesting to see how those models go compared to an ELO.

G'day Stephen,

Thanks for your comment. It's always nice to hear from a visitor and I'm pleased that you're enjoying the site.

Re the Kaggle competition you mentioned, I discovered this interesting paper from the guy who won it (http://blog.kaggle.com/wp-content/uploads/2011/02/kaggle_win.pdf), which does give me some ideas about how to improve MAFL MARS Ratings. The overfitting issue to which he alludes is particularly apt in an AFL context. I've dealt with that in a fairly clunky way by capping the maximum winning margin at 78 points, but more robust approaches might well do a better job.

The notion of a decay function is also appealing. I've been meaning to do some analysis about the extent to which perturbing the results of, say, a single earlier game changes the current Rating of all teams and to measure the extent to which these effects dissipate over time.

I'm sensing some off-season homework ...

Thanks again,

TC